Prime Video: this is how generative AI on AWS is used to improve the viewing experience

Amazon Bedrock, a fully managed Amazon Web Services (AWS) service for building generative AI applications, serves as the backbone for generative AI features on the streaming service Prime Video, offering viewers “value and personalized knowledge” in the process.

Prime Video's use of generative AI on AWS shows up in several areas, starting with a classic: personalized content recommendations. Amazon Bedrock helps power personalized recommendations directly on Prime Video's “Movies” and “TV Shows” pages. On each page, viewers see collections of “movies we think you'll like” and “TV shows we think you'll like”, curated based on their interests and viewing history.

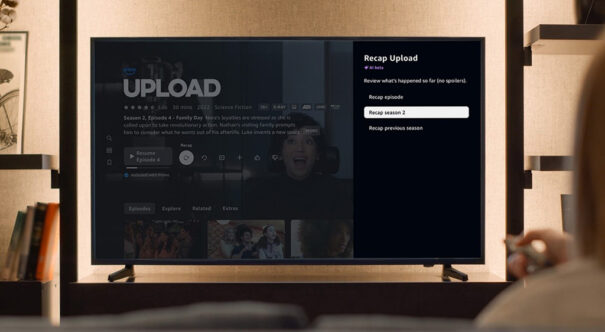

Another key tool is X-Ray Recaps, which helps viewers catch up on what they're watching without the risk of spoilers. It generates short, easy-to-digest text summaries of full seasons of TV shows, single episodes and even portions of episodes, describing major cliffhangers, character-related plot points and other details that viewers can access at any time while watching.

X-Ray Recaps combines foundation models managed by Amazon Bedrock with custom AI models trained with Amazon SageMaker. It analyzes multiple video segments and, combining them with subtitles or dialogue, generates detailed descriptions of events, places, times and key conversations. In addition, Amazon Bedrock Guardrails are applied to verify that the summaries do not contain spoilers.
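
The internals of X-Ray Recaps are not public. As a minimal illustration of the spoiler-avoidance idea only, the sketch below assumes each video segment has already been described by a model and keeps only the segments the viewer has fully watched. The `Segment` type and `spoiler_safe_recap` function are hypothetical, and this simple time-based filter is not how Amazon Bedrock Guardrails works.

```python
from dataclasses import dataclass


@dataclass
class Segment:
    """Hypothetical analyzed video segment with a model-written description."""
    start_s: float
    end_s: float
    description: str


def spoiler_safe_recap(segments: list[Segment], position_s: float, max_items: int = 3) -> list[str]:
    """Return descriptions of the most recent segments the viewer has fully watched.

    A segment qualifies only if it ends at or before the playback position,
    so nothing the viewer has not yet seen can appear in the recap.
    """
    watched = sorted(
        (s for s in segments if s.end_s <= position_s),
        key=lambda s: s.start_s,
    )
    # Keep only the latest max_items segments, in chronological order.
    return [s.description for s in watched[-max_items:]]


segments = [
    Segment(0, 60, "The crew boards the ship."),
    Segment(60, 120, "A storm damages the engine."),
    Segment(120, 180, "The captain reveals a secret."),
]
print(spoiler_safe_recap(segments, position_s=130))
```

Filtering on the segment's end time rather than its start time is the conservative choice: a segment that is still playing could contain the very reveal the viewer is about to see.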

In the audio domain, Dialogue Boost analyzes the original audio of a movie or series and uses AI to identify points where dialogue may be difficult to hear over background music and effects. The feature then isolates speech patterns and enhances the audio to make dialogue clearer. To power Dialogue Boost, Prime Video uses several AWS services, including AWS Batch with Amazon Elastic Container Registry (Amazon ECR), Amazon Elastic Container Service (Amazon ECS), AWS Fargate, Amazon Simple Storage Service (Amazon S3), Amazon DynamoDB and Amazon CloudWatch. Initially released in English, Dialogue Boost now supports six additional languages: French, Italian, German, Spanish, Portuguese and Hindi.
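
Prime Video has not published how Dialogue Boost decides where and how much to enhance speech. A minimal sketch of the general idea, assuming speech and background have already been separated into two stems and measured as RMS levels per analysis window (hypothetical inputs), is to compute a per-window speech-to-background ratio and boost speech only where it falls short of a target. The function name, target and cap are illustrative assumptions.

```python
import math


def speech_gains_db(speech_rms, background_rms, target_snr_db=10.0, max_gain_db=12.0):
    """Per-window speech gain (dB) needed to lift speech to target_snr_db above background.

    Windows with no speech or a silent background get 0 dB (no change).
    Gains are capped at max_gain_db to avoid over-amplifying speech.
    """
    gains = []
    for s, b in zip(speech_rms, background_rms):
        if s <= 0 or b <= 0:
            gains.append(0.0)  # nothing to boost, or dialogue is already unmasked
            continue
        snr_db = 20 * math.log10(s / b)  # speech-to-background ratio in dB
        deficit = target_snr_db - snr_db
        gains.append(min(max(deficit, 0.0), max_gain_db))
    return gains


# Window 1: clear speech; window 2: speech as loud as the music; window 3: no speech.
print(speech_gains_db([0.1, 0.1, 0.0], [0.01, 0.1, 0.5]))
```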

Improved live sports

The latest seasons of the NFL's Thursday Night Football (TNF) and NASCAR events on Prime Video marked the debut of several new Prime Insights: AI-powered streaming enhancements designed to bring fans closer to the action. They were built by Prime Sports producers, engineers, on-air analysts and AI experts working with an AWS team that used Amazon Bedrock.

Prime Insights illustrate hidden aspects of the game and predict crucial moments before they happen. For example, TNF's “Defensive Vulnerability” feature is based on a proprietary machine learning model that uses thousands of data points to analyze defensive and offensive formations and highlight where the offense will, or should, attack. NASCAR's “Burn Bar”, on the other hand, uses an AI model in Amazon Bedrock combined with live tracking data and telemetry signals from the cars. It analyzes the fuel consumption and efficiency of every car in the race, identifying which drivers are saving fuel and which are burning it quickly in their push to reach the finish line and take the checkered flag.
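
The Burn Bar's Bedrock model and its exact inputs are not described. As a deliberately simple, rule-based stand-in (not an AI model), the sketch below assumes telemetry arrives as hypothetical `(lap, fuel_remaining_gallons)` samples per car, computes each car's average fuel use per lap, and labels it relative to the field average.

```python
from statistics import mean


def fuel_per_lap(samples):
    """Average fuel used per lap, from the first and last (lap, fuel_remaining) samples."""
    (lap0, fuel0), (lap1, fuel1) = samples[0], samples[-1]
    if lap1 == lap0:
        raise ValueError("need samples from at least two different laps")
    return (fuel0 - fuel1) / (lap1 - lap0)


def burn_bar(telemetry, tolerance=0.05):
    """Label each car 'saving', 'burning' or 'on pace' versus the field-average fuel rate."""
    rates = {car: fuel_per_lap(samples) for car, samples in telemetry.items()}
    field_avg = mean(rates.values())
    labels = {}
    for car, rate in rates.items():
        if rate < field_avg * (1 - tolerance):
            labels[car] = "saving"
        elif rate > field_avg * (1 + tolerance):
            labels[car] = "burning"
        else:
            labels[car] = "on pace"
    return labels


telemetry = {
    "car_1": [(0, 18.0), (10, 13.0)],  # 0.5 gal/lap
    "car_2": [(0, 18.0), (10, 12.0)],  # 0.6 gal/lap
    "car_3": [(0, 18.0), (10, 11.0)],  # 0.7 gal/lap
}
print(burn_bar(telemetry))
```

The `tolerance` band keeps cars that are within a few percent of the field average from flickering between labels as new telemetry arrives.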

In addition, Rapid Recap, also powered by Amazon Bedrock on AWS, helps fans who join an event already in progress get up to speed quickly. The tool automatically compiles a complete summary of highlights up to two minutes long.
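
How Rapid Recap chooses its highlights is not disclosed. One simple way to respect a two-minute limit, sketched here under the assumption that each highlight already has a hypothetical importance score, is a greedy pass: take the highest-scoring clips that still fit in the 120-second budget, then replay them in chronological order. The `Highlight` type and `rapid_recap` function are illustrative names.

```python
from dataclasses import dataclass


@dataclass
class Highlight:
    start_s: float     # position of the clip in the live event
    duration_s: float  # clip length in seconds
    score: float       # hypothetical importance score, e.g. from a model


def rapid_recap(highlights, budget_s=120.0):
    """Greedily pick the highest-scoring clips that fit the time budget."""
    chosen, used = [], 0.0
    for h in sorted(highlights, key=lambda h: h.score, reverse=True):
        if used + h.duration_s <= budget_s:
            chosen.append(h)
            used += h.duration_s
    # Present the selected clips in the order they happened.
    return sorted(chosen, key=lambda h: h.start_s)


highlights = [
    Highlight(10, 50, 0.9),
    Highlight(300, 60, 0.8),
    Highlight(200, 30, 0.7),
    Highlight(500, 10, 0.6),
]
recap = rapid_recap(highlights)
print([h.start_s for h in recap], sum(h.duration_s for h in recap))
```

Greedy selection is fast and easy to reason about but not guaranteed to be optimal; an exact 0/1 knapsack over clip durations would maximize total score at higher cost.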

Improved video understanding

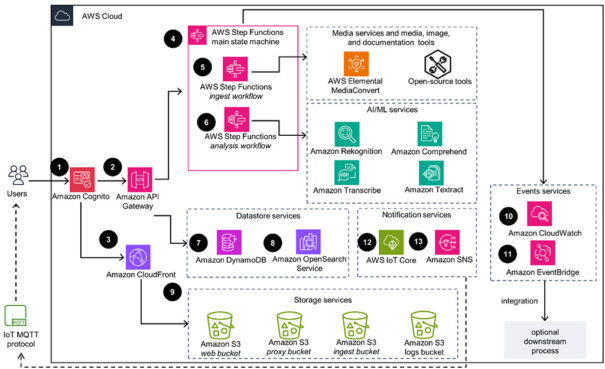

Prime Video's marketing assets are stored across disparate systems, sometimes with insufficient metadata. This makes it difficult for teams to effectively discover content, track entitlements, perform quality control, and analyze and monetize their content. With generative AI, AWS customers can better understand their media assets by extracting metadata and generating vector embeddings, a process known as video understanding.

To address this, Prime Video began using the Guidance for Media2Cloud on AWS, which provides comprehensive media analysis at the frame, shot, scene and audio levels. The guidance helps enrich asset metadata (such as celebrity recognition, on-screen text, content moderation, mood detection and transcription). Powered by Amazon Bedrock, Amazon Nova, Amazon Rekognition and Amazon Transcribe, Media2Cloud enables faster, more accurate video understanding to improve content management, search capabilities and audience engagement.
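
The article does not say how Media2Cloud stores or queries vector embeddings. To illustrate the general idea behind embedding-based search, the sketch below represents each asset as a vector (tiny made-up 3-dimensional vectors here, standing in for real model embeddings) and ranks assets by cosine similarity to a query vector. The asset IDs and the `search` function are hypothetical.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def search(query_vec, index, k=2):
    """Return the IDs of the k assets whose embeddings are most similar to the query."""
    ranked = sorted(index.items(), key=lambda item: cosine(query_vec, item[1]), reverse=True)
    return [asset_id for asset_id, _ in ranked[:k]]


# Toy embeddings; a real system would get these from an embedding model.
index = {
    "trailer_a": [1.0, 0.0, 0.0],
    "poster_b": [0.0, 1.0, 0.0],
    "clip_c": [0.9, 0.1, 0.0],
}
print(search([1.0, 0.0, 0.0], index))
```

This brute-force scan compares the query against every asset; at the scale of hundreds of thousands of assets, a dedicated vector index would typically replace it.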

Prime Video's media asset metadata is automatically sent to AWS partner Iconik for media asset management (MAM). As a result, Prime Video has enriched hundreds of thousands of assets and improved discoverability across its marketing archive.
